Your SSH Key is Obscurity

I've heard several times, "security through obscurity is no security at all". You've probably heard the same if you've ever studied or worked in cybersecurity. It's often used when a junior suggests some simple change to enhance security, like changing the port an SSH daemon listens on. A senior may respond with "security through obscurity is no security at all", implying that that change won't actually give any benefit, and the simplest solution (keeping the default port) is best.

If you've never thought twice about that statement, I hate to break it to you: it's totally wrong.

Prevention through obscurity

Security through obscurity is not only essential, it makes up almost all preventative measures in cybersecurity. SSH keys, OS logins, disk encryption, secure boot, TOTP, etc. are all 100% security through obscurity. Any key that grants access to a system is one value out of a finite set of possible bit strings. Gaining unauthorized access is merely a matter of finding the correct one. As the old saying goes, "the best way to hack into something is to enter the correct password."

I recognize that IT pros who denigrate "security through obscurity" are usually talking about architectural obscurity, rather than cryptographic. However, I would argue that cryptographic and architectural obscurity are the same kind of thing, because they both force an attacker to search a larger possibility space. Changing an SSH port adds a small degree of obscurity, while using a 256-bit key adds an astronomical amount. The phrase "security through obscurity" treats them as categorically different, when they are not.
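To put rough numbers on that comparison, here is a minimal sketch that measures both kinds of obscurity in the same unit: bits, meaning the base-2 logarithm of the number of possibilities. It assumes every possibility is equally likely and that the attacker has to guess blindly. The function name `bits_of_obscurity` is made up for this illustration.

```python
import math

def bits_of_obscurity(possibilities: int) -> float:
    """Return log2 of the number of equally likely possibilities."""
    return math.log2(possibilities)

# A TCP port is a 16-bit number; port 0 is reserved, leaving 65,535 choices.
port_bits = bits_of_obscurity(65_535)

# A 256-bit key has 2**256 possible values.
key_bits = bits_of_obscurity(2**256)

print(f"non-default SSH port: ~{port_bits:.1f} bits")
print(f"256-bit key:           {key_bits:.0f} bits")
```

The port comes out at just under 16 bits and the key at 256. Same unit, wildly different amounts.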

For example: any sane IT professional would recognize that leaking the requirements of a password system leads to reduced security. If would-be hackers know that your passwords require a capital letter and a number and will be 6-20 characters, they have a smaller number of passwords to try in order to gain access.

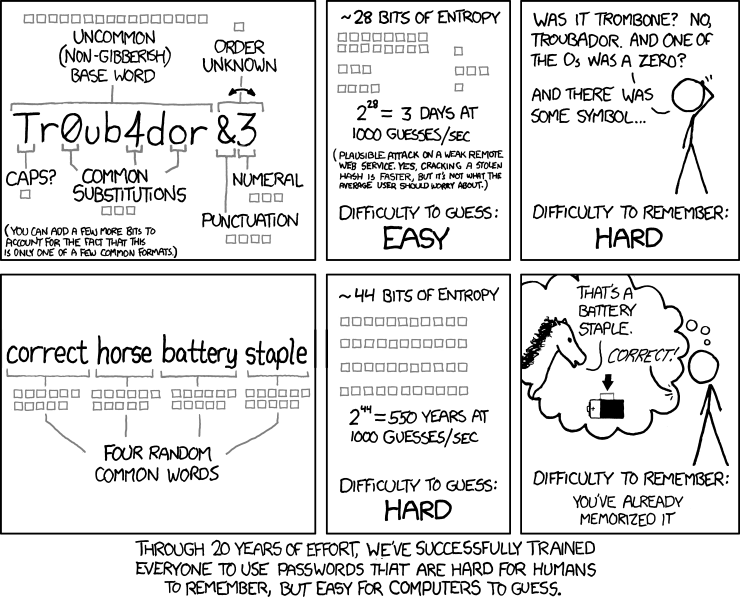

The thing that IT pros don't say outright is: the decrease in security is precisely because of a decrease in architectural obscurity. In this case, the obscurity is the size of the possibility space of the password system, also called entropy. This XKCD comic is helpful to think through the idea, if it's new to you.
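If you want to count the possibility space yourself, the sketch below is one way to do it. It assumes passwords are drawn uniformly at random from the 94 printable ASCII characters (26 uppercase, 10 digits, 58 others), which real users do not do, and the helper names are hypothetical. It compares every string of 6-20 characters against only those that satisfy the leaked "one capital letter and one number" rule.

```python
import math

def count_passwords(length: int, upper: int = 26, digits: int = 10,
                    other: int = 58, policy: bool = False) -> int:
    """Count passwords of a given length over an alphabet of
    upper + digits + other characters.

    With policy=True, count only passwords that contain at least one
    uppercase letter and at least one digit (inclusion-exclusion).
    """
    total = upper + digits + other
    if not policy:
        return total ** length
    return (total ** length
            - (total - upper) ** length            # no uppercase at all
            - (total - digits) ** length           # no digit at all
            + (total - upper - digits) ** length)  # neither, subtracted twice above

def entropy_bits(count: int) -> float:
    """Entropy in bits of a uniform choice among `count` options."""
    return math.log2(count)

lengths = range(6, 21)  # 6 to 20 characters inclusive
unconstrained = sum(count_passwords(n) for n in lengths)
constrained = sum(count_passwords(n, policy=True) for n in lengths)

print(f"any 6-20 char string:   {entropy_bits(unconstrained):.2f} bits")
print(f"must match leaked rule: {entropy_bits(constrained):.2f} bits")
```

Under these assumptions the second number is always the smaller one, and the gap widens as passwords get shorter. The same function can be called with a single length, such as `count_passwords(8, policy=True)`, to see that.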

So, the "base layer" of selective access prevention is built on obscurity. But what about responsive systems? They don't rely on obscurity, right?

Response enabled by obscurity

Many types of cybersecurity responses, from Fail2Ban-style blocking to self-destructing files or systems, depend on a failed authentication event to be triggered. Failed authentication requires at least enough obscurity to reach the threshold for a response. However, even a very low failure threshold does not fundamentally change the fact that the authentication system relies on obscurity.

Let's do a thought experiment. We're going to design a networked login system. This system has to be very secure, so we will permanently ban the IP address of any client who fails a single attempt. However, the employees at this company are bad at remembering passwords, so we're going to only use 4-digit pins for authentication.

Does this system sound secure? It turns out that IP addresses are relatively easy to obtain, and this system would be compromised in a matter of hours. Even in a magical world where people could not obtain new IP addresses, this system could easily be cracked by 10,000 determined friends, each trying a different PIN.

The system is insecure because the "key" in this case has a relatively small amount of obscurity. Even the overzealous response of automatically banning clients with one failed attempt did not significantly improve the situation. The system requires a certain threshold of obscurity to be secure, no matter how strict the lockout threshold.
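The arithmetic behind the thought experiment can be sketched in a few lines. This assumes the PIN is chosen uniformly at random, that each IP address gets exactly one guess before it is banned, and that the attacker never repeats a guess. The function names are invented for the illustration.

```python
PIN_SPACE = 10 ** 4  # 4 decimal digits: 0000 through 9999

def success_probability(distinct_ips: int, keyspace: int = PIN_SPACE) -> float:
    """Chance of hitting the PIN with one non-repeated guess per IP address."""
    return min(distinct_ips, keyspace) / keyspace

def expected_ips_needed(keyspace: int = PIN_SPACE) -> float:
    """Average number of non-repeated guesses needed to find a uniformly
    random secret, which here equals the number of IP addresses burned."""
    return (keyspace + 1) / 2

for ips in (1, 100, 5_000, 10_000):
    print(f"{ips:>6} IPs -> {success_probability(ips):.2%} chance of success")

print(f"average IPs needed: {expected_ips_needed()}")
```

The one-strike ban turns every guess into one burned IP address, but it does nothing to the size of the space being searched.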

This is a bit of an extreme example, but, from an attacker's perspective, it is still fundamentally the same type of problem as a login which requires an Ed25519 key. The only difference is the amount of obscurity: 4 decimal digits (about 13 bits) versus 32 bytes (256 bits).

To call back to the example in the intro: a responsive system would benefit from the changed SSH port by being given the opportunity to ban clients who scan ports. A host using the default port may miss that chance, because attackers can simply start trying to authenticate on port 22 without scanning at all.
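As a toy illustration of that idea, here is an in-memory sketch of a detector that bans any client that pokes at too many ports other than the real SSH port. Everything in it is hypothetical: the port number 2222, the threshold of 3, and the `ScanDetector` class itself. It is not how Fail2Ban or any real tool is implemented, and a real deployment would work from firewall logs rather than method calls.

```python
from collections import defaultdict

SSH_PORT = 2222      # hypothetical non-default SSH port
MAX_WRONG_PORTS = 3  # hypothetical number of wrong ports tolerated before a ban

class ScanDetector:
    """Tracks which wrong ports each client has touched and bans scanners."""

    def __init__(self, ssh_port: int = SSH_PORT,
                 max_wrong_ports: int = MAX_WRONG_PORTS) -> None:
        self.ssh_port = ssh_port
        self.max_wrong_ports = max_wrong_ports
        self.wrong_ports: dict[str, set[int]] = defaultdict(set)
        self.banned: set[str] = set()

    def observe(self, ip: str, port: int) -> bool:
        """Record a connection attempt.

        Returns True only if the client is allowed through to SSH.
        """
        if ip in self.banned:
            return False
        if port == self.ssh_port:
            return True
        self.wrong_ports[ip].add(port)
        if len(self.wrong_ports[ip]) >= self.max_wrong_ports:
            self.banned.add(ip)
        return False

detector = ScanDetector()

# A scanner sweeping upward from low ports is banned before it finds 2222.
for port in (20, 21, 22, 23):
    detector.observe("203.0.113.5", port)
print(detector.observe("203.0.113.5", SSH_PORT))   # banned client

# A legitimate client that already knows the port connects directly.
print(detector.observe("198.51.100.7", SSH_PORT))
```

A client who knows the port never trips the detector, while a client who has to search for it reveals itself by searching.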

Don't get me wrong, I recognize that automated responses to attacks vastly improve the security of a system. However, the statement "security through obscurity is no security at all" would imply that a good system can be secure with no obscurity, and that's simply not the case.

Non-obscure security

There are instances of security that are not reliant on obscurity, such as airgapped systems or network isolation controls. However, these are architectural decisions that are outside of most day-to-day security applications, which is where the phrase "security through obscurity" often gets misapplied.

Conclusion

So, who was right: the junior who wanted to change the SSH port, or the senior who judged that the change wouldn't benefit them?

I think both were, to a degree. The junior recognized that changing the port would mean that hackers would need to take the extra step of scanning all the ports on a server before gaining access, and that that would improve security at least a little.

However, the senior understood that the change didn't benefit them significantly. If I were the senior, I might have simply stated that the effort or additional complexity outweighed the security benefit for their organization. Saying "security through obscurity is no security at all" may lead juniors to a false understanding of what cybersecurity is, in two ways. First, they may think that there's some magic in cryptography that makes it not just obscurity, but something more. Second, they may think that architectural obscurity has no value whatsoever, when it demonstrably does.

Denigrating "security through obscurity" is just bad communication. To foster growth and honest communication in computing, it's time to let this phrase rest.